Dr. Nimish Biloria / Info-Matter

I am happy to be here and, to be honest, I was quite impressed when I saw the title of the lecture series, because process is what we focus on at Hyperbody. Hyperbody is a research group at TU Delft in the Netherlands that focuses specifically on the intricate processes behind information systems and their linkage with associative material formations in architectural design. In a way, you can also connect what we do with many computational processes, such as evolutionary algorithms and generative design methodologies. Essentially, we focus on bottom-up computational modes of gathering and understanding heterogeneous data. This allows us to comprehend a site as a rich source of data for initiating computational simulations that generate informed spatial entities. The title of today’s talk, “Info-matter”, refers specifically to the correspondence between information systems and material systems: the intricate link between information, derived from meaningful data sets, and matter itself.

At Hyperbody we usually develop sample and proof-of-concept projects as 1:1 working prototypes. Towards this end, we have also acquired robotic arms, so besides real-time interactive prototypes we are now building fully parametrized 1:1 scale building components. All of this is done at our home lab, called the Protospace Lab. Everything I am showing in my slides can be fully fabricated and is thus much more than glorified imagery. Moving from a model-based understanding of space to interactive prototypes is essential for us, because we deal with real-time interaction and with the ability of space to adapt in real-time to different kinds of dynamic activities and environmental conditions. Such prototypes also involve intensive work with electronics: sensing, actuation and control systems embedded within the architectural spaces and components themselves.

Protospace Lab

One of our earlier labs was called the Protospace Lab. Its focus was non-standard parametric architectural entities and how technology and architecture could integrate and co-evolve. Technology becoming an extension of yourself, a connection with your own neural self, is a direction we are actively pursuing at Hyperbody. We want to think about technology aiding and assisting us in our everyday lives, and thus to develop creative approaches to architecture.

Such approaches involve a multi-dimensional mode of thinking, because architecture as a field deals with multiple parameters: culture, economy and structure, complexity of space, movement of people, activity patterns, demographics and environmental factors, to name a few. The way we create relational logics among all these parameters is exactly the point where computation plugs in. When you look at newly designed constructs, you also see a complexity of form, which is aided by the computational tools we have today. But sophistication in terms of buildability, realizing these forms with utmost precision, is in a way almost complementary [to all other parameters]. We always talk about CAD and its integration, but at the same time we are looking at bottom-up generated computational tools, which go beyond producing representational drawings and aid in developing performative design solutions. The formal expressions you see in the slides are thus the result of a careful negotiation between computational output and the designer’s own intent.

Another aspect is real-time interactive space itself: how architectural space can start behaving like a bio-organism that performs, with adaptation, both physical and ambient, at its core. These adaptations are connected with the behaviour (physiological and psychological) of people as well as with the dynamic environmental conditions in which the architectural space is embedded. Through my talk I shall convey the journey that we at Hyperbody take in order to turn our visions of real-time interaction into architectural reality.

A fundamental shift in the way we think about architectural space is vital. Nowadays we think of architecture from a systems point of view. A system is an assemblage of interrelated elements comprising a unified whole, which facilitates the flow of information, matter or energy: a set of interacting entities for which a mathematical model can often be constructed. The question of what the interconnections and interdependencies between data sets, understood systemically, have to offer architecture thus becomes very important.

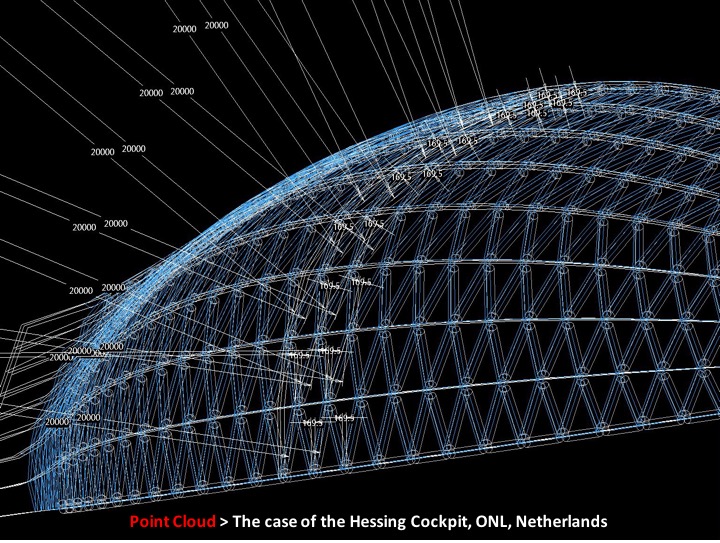

When we talk about the simultaneous existence of the virtual and the real, we are not drawing a sharp distinction between them: nothing virtual is non-real, and nothing real is non-virtual. Everything is interlinked in a way. The question is how the material and the digital, the structural component and the informational component, coexist. How can these entities intermingle? What is interesting is a middle layer that plays an instrumental role in binding these two worlds: a computational world involving scripting. Within it we also work with abstract entities called “point clouds”, which can be simplistically interpreted as virtual geometric vertices constituting a topology. The rest of the lecture illustrates how these virtual entities are linked with physical structural nodes, in parametrically designed static structures as well as in interactive ones.

Why is this so important? Let us look at a project by ONL: “The Acoustic Barrier”. Here you will see that the morphology of the project varies along the entire 3-kilometre stretch it spans. The constant differentiation in such a formal language includes fluid geometric transitions and is quite difficult to fabricate precisely. If you want to manufacture a project like this with utmost precision, how can you do it? How do computational tools help you do it? Let us understand this process through very simple principles, accompanied by project examples.

The Point Cloud

First, let us try to understand what swarms are. In the video of flocking birds that I am showing now, imagine each bird to be a point in space. These points do not collide with each other; they seamlessly follow changing directions and maintain a safe distance from each other. If we were to program this natural behaviour computationally, we would code three rules per point: separation, alignment and cohesion. So what am I actually doing? I am creating a parametric logic between the simplest constituents of geometry: points, or vertices. What does this lead to? Let us look at one of the sections of ONL’s Acoustic Barrier project to understand it.
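
The three flocking rules can be sketched in Python roughly as follows. This is a minimal 2D boids-style illustration; the neighbourhood radius, minimum distance and rule weights (`radius`, `min_dist`, `w_sep`, `w_ali`, `w_coh`) are assumed values chosen for illustration, not parameters used by Hyperbody or ONL.

```python
import math
from dataclasses import dataclass


@dataclass
class Boid:
    """A single agent: a point with a position and a velocity (2D for brevity)."""
    x: float
    y: float
    vx: float
    vy: float


def step(boids, radius=5.0, min_dist=1.0,
         w_sep=1.5, w_ali=0.05, w_coh=0.01, dt=1.0):
    """Advance all boids by one time step using separation, alignment and cohesion."""
    new_velocities = []
    for b in boids:
        sep_x = sep_y = 0.0   # push away from neighbours that are too close
        ali_x = ali_y = 0.0   # sum of neighbour velocities
        coh_x = coh_y = 0.0   # sum of neighbour positions
        n = 0
        for other in boids:
            if other is b:
                continue
            dx, dy = other.x - b.x, other.y - b.y
            d = math.hypot(dx, dy)
            if d >= radius:
                continue
            n += 1
            ali_x += other.vx
            ali_y += other.vy
            coh_x += other.x
            coh_y += other.y
            if 0.0 < d < min_dist:
                sep_x -= dx / d
                sep_y -= dy / d
        vx, vy = b.vx, b.vy
        if n:
            # Alignment: steer toward the neighbours' average velocity.
            vx += w_ali * (ali_x / n - b.vx)
            vy += w_ali * (ali_y / n - b.vy)
            # Cohesion: steer toward the neighbours' centre of mass.
            vx += w_coh * (coh_x / n - b.x)
            vy += w_coh * (coh_y / n - b.y)
        # Separation: move away from crowding neighbours.
        vx += w_sep * sep_x
        vy += w_sep * sep_y
        new_velocities.append((vx, vy))
    # Apply all updates at once so every boid reacted to the same snapshot.
    for b, (vx, vy) in zip(boids, new_velocities):
        b.vx, b.vy = vx, vy
        b.x += vx * dt
        b.y += vy * dt
```

Each boid reacts only to neighbours inside `radius`; tuning the three weights shifts the flock between tight clustering and loose, well-spaced motion.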

This section is composed of a complex database of 7000 points. Every point carries its coded separation, alignment and cohesion information as its inherent DNA. When I stretch or pull out any point, the surrounding points, and hence the entire surface condition, modify relationally because of the parametric logic, that is, because of the separation, alignment and cohesion values embedded in each point. It is a pretty simple rule, but it is quite effective on many levels. Now imagine these points becoming the structural nodes of the building. These nodes can also be mined for data such as their three-dimensional location, material differentiation per point, and the stress and strain going through each point. In the case of the barrier, the skin is a three-layered system: a glass panel with a rubber gasket, and behind it a steel section with a perforated sheet. All information about the size, shape, location and material of each layer is mined from the points between which each triangular surface (glass panel) spans. ONL used MAXScript and AutoLISP to register this data in a database, derive the exact dimensions of the different building components (glass, rubber gasket, joining steel sections, perforated metal sheeting) and communicate them to a manufacturing machine.
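
Mining fabrication data from the nodes can be illustrated with a small Python sketch. It assumes each triangular glass panel is stored as three node ids into a table of xyz coordinates, and derives edge lengths, glass area and a gasket length from them. The record fields are hypothetical, and ONL’s actual scripts were written in MAXScript and AutoLISP, not Python.

```python
import math


def panel_record(panel_id, nodes, tri):
    """Derive fabrication data for one triangular glass panel from its three nodes.

    nodes: dict node id -> (x, y, z); tri: the three node ids the panel spans.
    """
    a, b, c = (nodes[i] for i in tri)
    edges = (math.dist(a, b), math.dist(b, c), math.dist(c, a))
    # Panel area is half the magnitude of the cross product of two edge vectors.
    u = [b[k] - a[k] for k in range(3)]
    v = [c[k] - a[k] for k in range(3)]
    cross = (u[1] * v[2] - u[2] * v[1],
             u[2] * v[0] - u[0] * v[2],
             u[0] * v[1] - u[1] * v[0])
    area = 0.5 * math.sqrt(sum(x * x for x in cross))
    return {
        "panel": panel_id,
        "nodes": tuple(tri),
        "edge_lengths": edges,
        "glass_area": area,
        # Hypothetical: the rubber gasket runs around the whole glass perimeter.
        "gasket_length": sum(edges),
    }
```

Running `panel_record` over every triangle yields one row per unique panel, which could then be written to a database or exported as a cut list.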

Why is that so important? As I told you, every joint and every point is quite complex in such a three-layered system. Every junction differs, and thus every panel is unique. The point cloud structure that we set up computationally allows us to automate the otherwise laborious process of identifying the various aspects of such a geometry, while allowing it to be manufactured precisely. This level of precision ultimately allowed a very rapid pace of construction, because every node has only one possible connection into which its steel angles (structural members) fit, and these are specifically marked on each structural member. Production therefore proceeds rapidly. You test prototypes before you build, and you also test the structural aspects of particular parts of the building. The construction goes much faster. So the ‘point’ is essential and interesting for us, simply because of the three rule sets coded into it and the manner in which you start extracting information in this kind of design system.
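
The idea that each member fits only one node connection, and is marked accordingly, can be sketched as a labelling step. In this hypothetical Python example, a member’s label is derived from the ids of the two nodes it joins, so no two members can share a label; the millimetre units and the label format are assumptions.

```python
import math


def label_members(nodes, members):
    """Return a cut list in which each member's label names the two nodes it joins.

    nodes: dict node id -> (x, y, z) in millimetres; members: (node_a, node_b) pairs.
    Raises ValueError if two members would connect the same pair of nodes.
    """
    cut_list = []
    seen = set()
    for i, j in members:
        key = (min(i, j), max(i, j))  # direction-independent identity of the member
        if key in seen:
            raise ValueError(f"duplicate member between nodes {key}")
        seen.add(key)
        length = math.dist(nodes[i], nodes[j])
        cut_list.append({"label": f"N{key[0]}-N{key[1]}", "length": round(length, 1)})
    return cut_list
```

Because the label is unique per node pair, a member marked `N1-N2` can only be fitted between nodes 1 and 2 on site.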

Now, if we get back to the same ‘point’ and the same programmed rules of separation, alignment and cohesion, what happens when the point becomes actuated? What if every point in every cluster could be pulled in and pulled out? In a workshop we did in Australia, we connected physical sensors to point sets within a cluster of a geometric entity. We tried to pull out point sets by physically bending a sensor (assigned to a set of points) and to understand how the related points, connected to their neighbouring points, would start to react. We were trying to develop a relational model connecting the physical and the virtual. This small experiment gave us the idea to look into interactive environments themselves. We tried to develop design databases dealing with environmental, ergonomic and spatial parameters, and to work with kinetic, computational and control systems together. All of this aimed to produce smart architecture prototypes.
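
The sensor-to-point-set coupling from the workshop could be sketched as below. It assumes a normalised bend reading in [0, 1], a point cloud stored as an adjacency list, and a geometric falloff per hop so that more distant neighbours react less strongly; `max_offset`, `falloff` and `max_hops` are hypothetical parameters.

```python
from collections import deque


def propagate_pull(neighbours, assigned, bend, max_offset=100.0, falloff=0.5, max_hops=3):
    """Map one sensor reading to z-offsets for its point set and their neighbours.

    neighbours: dict point id -> list of adjacent point ids
    assigned:   point ids the sensor is wired to
    bend:       sensor reading, clamped to [0, 1]
    Returns dict point id -> offset; points beyond max_hops are not moved.
    """
    bend = min(max(bend, 0.0), 1.0)
    hops = {p: 0 for p in assigned}
    queue = deque(assigned)
    # Breadth-first search gives each point its hop distance from the pulled set.
    while queue:
        p = queue.popleft()
        if hops[p] == max_hops:
            continue
        for q in neighbours.get(p, []):
            if q not in hops:
                hops[q] = hops[p] + 1
                queue.append(q)
    # Offset halves (with the default falloff) for every hop away from the sensor's points.
    return {p: max_offset * bend * falloff ** h for p, h in hops.items()}
```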

Muscle Project

To give you an example, here is the Muscle project, which was exhibited at the Centre Pompidou in 2003. It explores the idea of an object whose external form can be manipulated because the object senses the proximity of people. The sensing systems detect not only how fast you approach the body, but also how you touch it. The idea is an emotional response, expressed through the manner in which the body activates. When it gets bored, it starts jumping to attract attention, so that people might come and play with it. If you approach too fast, a particular portion starts shivering, as if it were scared of you.
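
The Muscle’s emotional responses amount to mapping sensor readings to behaviours. A minimal rule-based sketch might look like this; the thresholds, units and behaviour names are hypothetical, since the lecture does not describe the project’s actual control logic.

```python
def choose_behaviour(approach_speed, seconds_idle, touched,
                     fast_speed=1.5, bored_after=30.0):
    """Pick a reaction for a Muscle-like body from its sensor readings.

    approach_speed: speed of the nearest visitor (hypothetical unit: m/s)
    seconds_idle:   time since anyone last interacted
    touched:        whether a touch sensor is currently active
    """
    if approach_speed > fast_speed:
        return "shiver"            # approached too fast: appear scared
    if touched:
        return "respond_to_touch"
    if seconds_idle > bored_after:
        return "jump"              # bored: try to attract attention
    return "rest"
```

Checking the fast approach first gives the ‘scared’ reaction priority over every other state.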

Muscle Tower Project

From that we went on to the Muscle Tower project. We were working with different sets of building components and the nodes that connect all of these components together. This project was fully built, manufactured and engineered at Hyperbody. It is a system that has to constantly support and balance itself in real-time. The work was done together with students. We gave them the following assignment: if this were an advertising tower, it had to sense where the greatest number of people around it were, and then bend and lean towards them, as if to forcefully advertise its message. You can see multiple stacked sections (as 3D components) in the act of balancing themselves. All of these sections, and the nodes connecting them, constantly exchange information such as their xyz location in space and their tilt angles, in order to regulate the air pressure circulating in the fluidic muscles, which pull the 3D sections in different directions in real-time so that the structure does not collapse. The bigger picture was that you could create a built-in self-stabilizing mechanism within tall buildings that would constantly maintain their verticality during the movement of tectonic plates in seismic zones.
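
The self-balancing loop can be illustrated with a proportional controller for a single section driven by two opposing fluidic muscles, plus a rule that sets the lean target from where people are sensed. This is a hypothetical Python sketch: the gain, the pressures in bar and the lean limit are assumed, and a real tower would coordinate many sections and tilt axes.

```python
def clamp(value, low, high):
    """Limit value to the closed interval [low, high]."""
    return min(max(value, low), high)


def lean_target(people_positive_side, people_negative_side, max_lean_deg=10.0):
    """Lean toward the side where more people are sensed, within a safe limit (degrees)."""
    total = people_positive_side + people_negative_side
    if total == 0:
        return 0.0
    return max_lean_deg * (people_positive_side - people_negative_side) / total


def muscle_pressures(tilt_deg, target_deg, base_bar=3.0, gain=0.4, max_bar=6.0):
    """Proportional control of one section's two antagonistic fluidic muscles.

    A positive error means the section should tilt further toward the positive
    side, so that side's muscle gets more pressure (it contracts and pulls) and
    the opposite muscle is relieved by the same amount.
    Returns (pressure_positive_side, pressure_negative_side) in bar.
    """
    error = target_deg - tilt_deg
    delta = gain * error
    return clamp(base_bar + delta, 0.0, max_bar), clamp(base_bar - delta, 0.0, max_bar)
```

Run every control cycle with the latest tilt reading, the error shrinks as the section approaches its target, so pressures settle back toward `base_bar`.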

The Interactive Wall

The Interactive Wall is a project we did with FESTO. It is somewhat more complex than our earlier projects, since it has three modalities: an in-built, constantly varying lighting system, its own in-built structural logic, and an internal sound source. The sound changes depending on how people interact with the wall. This was a very interesting project for us, as it communicated the principles of what we had done over eight years. But in this case it is three times more complex, because we are working with the changing modality of sound, and the sound is connected with the intensity and display of the lighting patterns. At the same time, all of the elements had to work together in a complex way: none of them could be allowed to topple over; rather, all of them had to work in synchronization with each other.

These are just some examples of simple visions that we wanted to take further. We have been talking about surface conditions creating multiple opportunities, among other things. There are many possibilities, but the core idea is to look at them through their components.

Performative Building Skin Systems

We were working with performative building skin systems and their structural, behavioural and physiological adaptations. In this case we worked in reverse: rather than starting with an algorithm, we created a prototype first. The prototype serves only to establish a notational parametric logic. The more I slide a double-skin façade system, the more variation it creates in the vertical dimension, and the more it changes the amount of light and air let into the building. All of this results in an algorithmic system that says: if the Z parameter is this much, then X and Y can become that much. This resulted in a very simple, notation-based process of algorithm generation.
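
The notation rule (“if Z is this much, then X and Y can become that much”) can be expressed as interpolation over values notated from the physical prototype. In this Python sketch, the sample table, the millimetre slide offsets and the opening fractions for light (x) and air (y) are invented placeholders, and linear interpolation is an assumed choice.

```python
from bisect import bisect_right

# Hypothetical notation recorded from a physical prototype:
# slide offset z (mm) -> (light opening fraction x, air opening fraction y)
NOTATION = [
    (0.0, (0.0, 0.0)),
    (50.0, (0.3, 0.1)),
    (100.0, (0.6, 0.4)),
    (150.0, (0.8, 0.7)),
]


def openings_for_slide(z, notation=NOTATION):
    """Linearly interpolate (x, y) openings for slide offset z from notated samples."""
    zs = [sample[0] for sample in notation]
    if z <= zs[0]:
        return notation[0][1]
    if z >= zs[-1]:
        return notation[-1][1]
    i = bisect_right(zs, z)  # first sample strictly above z
    (z0, (x0, y0)), (z1, (x1, y1)) = notation[i - 1], notation[i]
    t = (z - z0) / (z1 - z0)
    return (x0 + t * (x1 - x0), y0 + t * (y1 - y0))
```

Adding a row measured on the prototype refines the rule without changing the code, which keeps the prototype as the source of the logic.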

We also did another interesting thing in this project. We wanted to look at how light- and water-controlling components, as well as energy-generating components, could be built into the façade system. What if the skin became completely self-sustaining and did not need an external power source? What if the structure hosting the different components became almost a standardized system? Computationally, no matter how I change a surface condition, the entire space frame (which hosts these energy-related components) can be regenerated in real-time because of the notational sequences of the points. We were working on a complex information system describing how wind-based power systems would power the actuators for the solar flaps and the water collection units, in addition to the entire generative geometry logic behind it.
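
Regenerating the space frame from the notational sequence of points can be sketched by giving every node a grid index, so the member topology depends only on the indices while coordinates come from the current surface. This hypothetical Python sketch builds a two-layer frame; offsetting the bottom layer straight down by `depth`, rather than along surface normals, is a simplifying assumption.

```python
def space_frame(surface, depth):
    """Regenerate a two-layer space frame from an indexed grid of surface points.

    surface[i][j] is an (x, y, z) point. Members are defined purely by grid
    indices, so editing the surface changes node coordinates but never the
    topology. The bottom layer is offset straight down by `depth` (simplification).
    Returns (nodes, members): nodes maps (layer, i, j) -> (x, y, z); members
    are pairs of node keys.
    """
    rows, cols = len(surface), len(surface[0])
    nodes = {}
    for i in range(rows):
        for j in range(cols):
            x, y, z = surface[i][j]
            nodes[("top", i, j)] = (x, y, z)
            nodes[("bottom", i, j)] = (x, y, z - depth)
    members = []
    for layer in ("top", "bottom"):
        for i in range(rows):
            for j in range(cols):
                # Chords run along both grid directions within each layer.
                if i + 1 < rows:
                    members.append(((layer, i, j), (layer, i + 1, j)))
                if j + 1 < cols:
                    members.append(((layer, i, j), (layer, i, j + 1)))
    for i in range(rows):
        for j in range(cols):
            # Verticals join each top node to the bottom node below it.
            members.append((("top", i, j), ("bottom", i, j)))
    for i in range(rows - 1):
        for j in range(cols - 1):
            # Diagonals brace each cell from a top corner to the opposite bottom corner.
            members.append((("top", i, j), ("bottom", i + 1, j + 1)))
    return nodes, members
```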

On the exterior, the façade tracks the amount of light the building receives at different times of day and dynamically opens or closes to maintain appropriate lighting levels inside, so it is constantly changing. Internally, however, it works on the principle of how many people pass by the façade. If I walk by, the embedded sensors immediately track me and vary the opening patterns to ensure visibility to the outside. The interior logic is thus exposed outwards, while the external environmental criteria control how internal illumination is maintained according to the needs of the program. This system was developed using simulations. If you work together with engineers and combine your skills, you can develop responsive systems like this that actually work. It no longer remains a vision but can become a reality.
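
The façade’s two inputs, exterior light and passers-by, could be combined per panel roughly as follows. This is a hypothetical Python sketch: the simple transmission model of interior light, the target lux and the minimum opening for visibility are assumptions, not values from the project.

```python
def facade_opening(exterior_lux, target_lux, people_nearby,
                   transmission=0.05, visibility_min=0.6):
    """Return an opening fraction in [0, 1] for one façade panel.

    Assumed model: interior light ~ transmission * opening * exterior_lux.
    """
    if exterior_lux <= 0:
        opening = 1.0  # no daylight: open fully
    else:
        opening = target_lux / (transmission * exterior_lux)
    opening = min(max(opening, 0.0), 1.0)
    # When sensors detect someone passing, open at least enough to see outside.
    if people_nearby > 0:
        opening = max(opening, visibility_min)
    return opening
```

The light rule sets a baseline from outside conditions, and the occupancy rule only ever widens it, mirroring how the interior logic is exposed outwards.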

Multi-Agent Swarm Modelling

Now I am going to talk about something called ‘multi-agent swarm modelling’, or simulation-driven design systems. In this case I was building a parametric logic for town planning between various program typologies (commerce, green areas, housing zones, etc.) by specifying how much of each zone, and in what proportion, is needed within a specific site and environmental context. This relational model is a very simple kind of system: it constantly tries to balance the manner in which separate programmatic agents self-organise. The programs are represented volumetrically as spherical balls, whose width and depth differentiation results from the environmental factors of the site. A project in Lagos, Nigeria, was developed with similar methods in a fully parametric manner, and its computational system was built entirely in Grasshopper. What was interesting was the manner in which the internal organisational logic of the project was generated using similar self-organising principles. Because of this, organisational variations were attained in three dimensions, in relation to contextual conditions, and the entire geometry of the project thus started evolving from the inside out.
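
The self-organisation of programmatic agents can be sketched as a simple relaxation in which agents repel when they overlap and attract partners they want to be adjacent to. This Python example works in 2D with circles instead of spheres, and the affinity weights, step count and rate are hypothetical; the Lagos project itself was built in Grasshopper, not with this code.

```python
import math


def self_organise(agents, affinity, steps=200, rate=0.1):
    """Relax program agents (circles) so they avoid overlaps and stay near partners.

    agents:   dict name -> [x, y, radius]  (radius ~ required floor area); updated in place
    affinity: dict (name_a, name_b) -> attraction weight in [0, 1]
    """
    names = list(agents)
    for _ in range(steps):
        moves = {n: [0.0, 0.0] for n in names}
        for idx, a in enumerate(names):
            for b in names[idx + 1:]:
                ax, ay, ar = agents[a]
                bx, by, br = agents[b]
                dx, dy = bx - ax, by - ay
                d = math.hypot(dx, dy) or 1e-9
                ux, uy = dx / d, dy / d  # unit vector from a toward b
                touching = ar + br
                if d < touching:
                    f = -(touching - d)  # overlapping: push apart
                else:
                    w = affinity.get((a, b), affinity.get((b, a), 0.0))
                    f = w * (d - touching)  # pull related programs into contact
                moves[a][0] += rate * f * ux
                moves[a][1] += rate * f * uy
                moves[b][0] -= rate * f * ux
                moves[b][1] -= rate * f * uy
        for n in names:
            agents[n][0] += moves[n][0]
            agents[n][1] += moves[n][1]
    return agents
```

Each pair closes a fixed fraction of its gap per step, so related programs settle into contact while unrelated ones only separate until they no longer overlap.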

Multidimensional Relational Networks

Let us get back to the idea of multidimensional relational networks. The idea stays the same: the points are now conceived as different program agents, represented as spherical balls, which inform each other about their preferences within an environmental context. I was trying to understand the site itself as a set of heterogeneous parameters. You can read these parameters in the upper left corner of the slide, and see how the value of each parameter can be tweaked. What is interesting is that all of this happens in real-time. In this case the site is the Delft train station. The train timings, the number of bicycles, the number of people, etc. are all variables. Many things are constantly changing and are thus registered as dynamic data on the site, rather than my looking at the site only as a passive observer. Here I am not talking about generating architecture from studies of the façades and scale of the surrounding buildings, and thus developing designs that fit the context, but about the flow of information from dynamic entities moving through the site, and the importance of this information for us.

We are working with these kinds of multi-agent-based simulations, with parameters such as noise, accessibility, sunlight and visibility. In this case, every square metre of the site constantly captures such data. Exhibition halls, gallery spaces, cafeterias, etc. act as program agents that have to negotiate these dynamic conditions and, like a biological process, self-organise over time. These program agents not only self-organise in accordance with the environmental contexts they are embedded in, but also find the most suitable positions in relation to each other. Do not forget that the cafeteria, the toilets and the exhibition hall are also in a relationship with each other. That is just one dimension of relationships. The other is with the environment: with the noise levels, the accessibility levels, etc. These relationships thus compound constantly and are under constant negotiation.
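
How program agents weigh site data and each other can be illustrated with a greedy placement over a grid of site cells. This is a deliberately simplified, one-pass stand-in for the iterative multi-agent negotiation described above, written in Python; the parameter names, weights and Manhattan-distance adjacency penalty are all assumptions.

```python
def place_programs(site, preferences, order, related=None, adjacency_weight=0.1):
    """Greedily assign each program agent to the free site cell it scores highest.

    site:        dict (col, row) -> {"noise": .., "accessibility": .., ...} in [0, 1]
    preferences: dict program -> {parameter: weight}; a negative weight means avoid
    order:       programs are placed in this order
    related:     dict program -> list of programs it wants to be near
    """
    related = related or {}
    placed = {}
    for program in order:
        best, best_score = None, float("-inf")
        for cell, values in site.items():
            if cell in placed.values():
                continue  # each cell hosts at most one program
            score = sum(w * values.get(p, 0.0) for p, w in preferences[program].items())
            for other in related.get(program, []):
                if other in placed:
                    ox, oy = placed[other]
                    # Penalise grid distance to already-placed partner programs.
                    score -= adjacency_weight * (abs(cell[0] - ox) + abs(cell[1] - oy))
            if score > best_score:
                best, best_score = cell, score
        placed[program] = best
    return placed
```

Because the site values can be refreshed from live data, rerunning the placement whenever readings change gives a crude version of the real-time behaviour.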

Urban Furniture System

To conclude, I want to show a prototype of an urban furniture system that can change its form. This is the basic idea of real-time interaction. The object can be monitored in real-time to understand how people are interacting with it and to indicate whether or not it needs another extension, so that people could use it in a different way. Its usage pattern is constantly being calculated. Information about any emerging extension of the furniture is streamed directly to our CNC machines, a panel is cut, and then we go and assemble it on location. The idea of an inert object that can compute data in real-time becomes much more interesting when we start thinking about the evolutionary aspect of the object itself.
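
The monitoring loop that decides when the furniture needs an extension can be sketched as a usage count per zone compared against capacity. The zones, capacities, 0.8 threshold and job format in this Python example are hypothetical; turning a job into CNC toolpaths is outside the sketch.

```python
from collections import Counter


def review_usage(events, capacity, threshold=0.8):
    """Flag furniture zones whose usage suggests an extension is needed.

    events:   iterable of zone ids, one per sensed use (e.g. a person sitting)
    capacity: dict zone id -> uses per period the zone comfortably supports
    Returns a list of hypothetical cut jobs for zones at or above the threshold.
    """
    counts = Counter(events)
    jobs = []
    for zone, cap in capacity.items():
        load = counts[zone] / cap  # Counter returns 0 for zones never used
        if load >= threshold:
            jobs.append({"zone": zone, "load": round(load, 2), "action": "cut_extension_panel"})
    return jobs
```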

In my mind, binding information and matter could be the next step in where we are heading. Instead of buildings only being designed and then left to survive, what if they became processing systems that also inform us as designers? What new usage patterns could we discover? I think we will slowly start exploring those dimensions.